Evaluation beats architecture: the discipline that decides whether AI ships

At a Glance

- The most important architectural decision for LLM systems in 2026 is not the model — it is the evaluation.

- Three shifts that became visible across the PyCon DE & PyData 2026 talks: continuous instead of one-off evaluation, domain-specific instead of generic benchmarks, eval-coupled governance instead of a separate compliance layer.

- The right order when building an LLM solution: baseline in context first, then retrieval-augmented generation, then fine-tuning, then knowledge graphs if at all — not the other way around.

- The 2026 hiring profile: domain expert with appetite for experimentation, not ML PhD with an architecture plan.

The Unsexy Architecture Decision

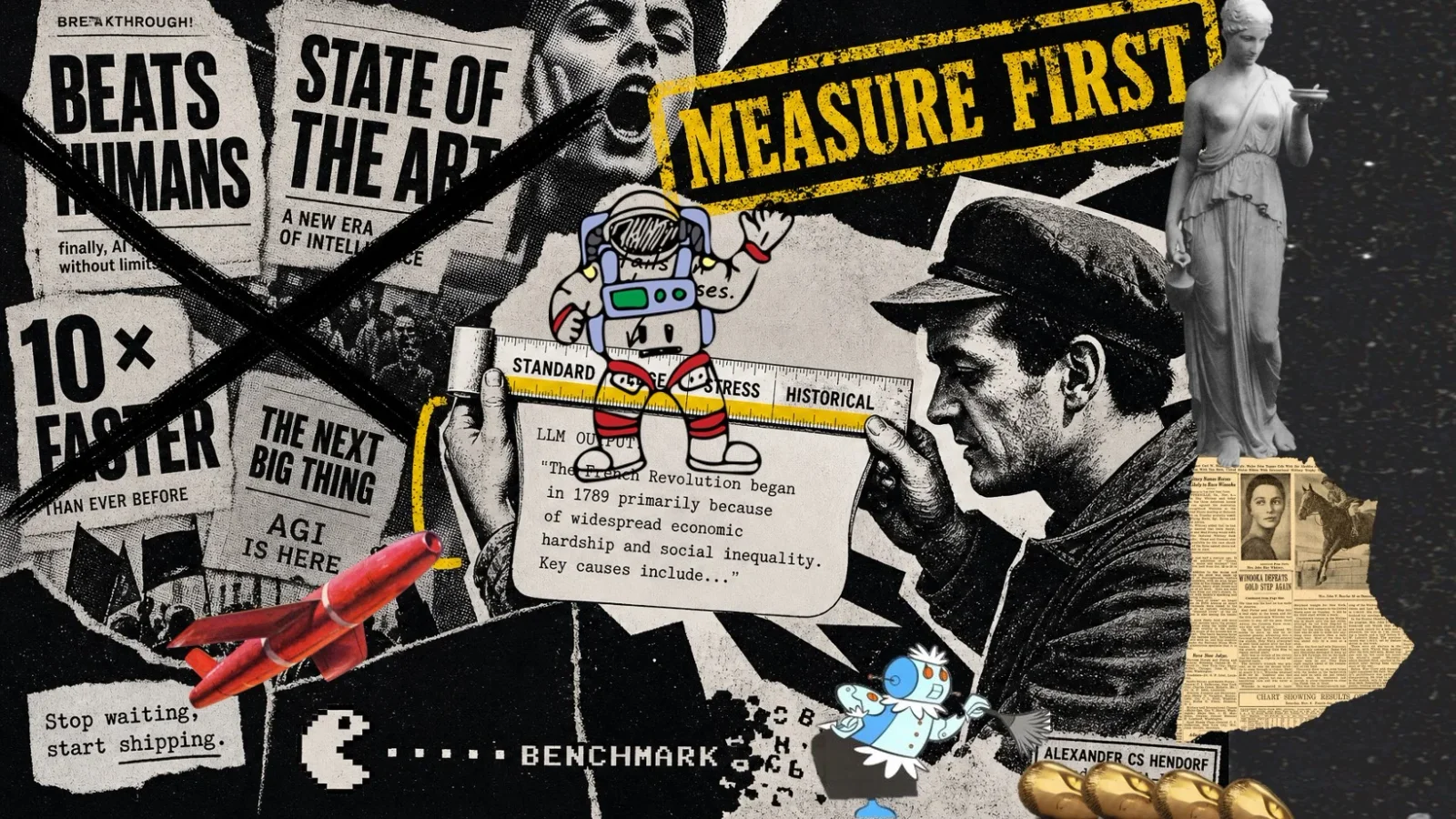

The most important architectural decision for LLM systems in 2026 is not the model. It is the evaluation.

That was one of the clearest lines running through the talks at PyCon DE & PyData 2026:

Productive teams don't win because they deploy the most spectacular model. They win because they measure earlier, more continuously, and more domain-specifically.

Where eval discipline was thought through from the start, 2026 has systems that hold. Where evaluation was defined as a downstream phase, the teams are often still on their first version. Not a law of nature — but a consistent pattern across multiple talks.

In 2026, evaluation is not a testing discipline. It is an architectural decision — and the one that shapes every other.

Three Shifts That Became Visible in 2026

Continuous, Not One-Off

The view that evaluation is a step at the end ("we have the model, now let's evaluate it") doesn't hold up in 2026. In the talks describing serious production work, evaluation was a continuous pipeline: with every model swap, every prompt change, every new dataset. The effort only pays off once it is already built in. Programmes that don't do this from the start mostly fail to retrofit it later.
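What "with every model swap, every prompt change" can look like as a gate is sketched below. This is a minimal illustration under assumptions, not a method shown in any of the talks: the names `EvalCase`, `accuracy` and `regression_gate` are hypothetical, the metric is a crude exact match, and the two answer tables stand in for a system before and after a change.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    question: str
    expected: str


def accuracy(system: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Share of cases whose answer matches the expected answer (case-insensitive)."""
    if not cases:
        raise ValueError("eval set must not be empty")
    hits = sum(
        system(case.question).strip().lower() == case.expected.strip().lower()
        for case in cases
    )
    return hits / len(cases)


def regression_gate(candidate: float, baseline: float, tolerance: float = 0.0) -> bool:
    """Return True if the candidate score is at most `tolerance` below the baseline."""
    return candidate >= baseline - tolerance


# Toy stand-ins for two versions of the same system (hypothetical data).
CASES = [
    EvalCase("capital of France?", "Paris"),
    EvalCase("2 + 2?", "4"),
]
ANSWERS_V1 = {"capital of France?": "Paris", "2 + 2?": "4"}
ANSWERS_V2 = {"capital of France?": "paris", "2 + 2?": "5"}

baseline_score = accuracy(lambda q: ANSWERS_V1[q], CASES)
candidate_score = accuracy(lambda q: ANSWERS_V2[q], CASES)
gate_passed = regression_gate(candidate_score, baseline_score)
```

The point of the sketch is the placement rather than the metric: the gate runs on every change, so a regression surfaces at the change that caused it.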

Domain-Specific, Not Generic

Public benchmarks like MMLU or HumanEval have their place in the small print of model comparisons. For the question of whether a model holds up in a specific application, they are nearly useless.

Frank Rust and Thomas Prexl showed in their talk how productive LLM teams approach this: they don't start with architecture, they start with 100 real user questions, correct answers and reliable sources. That set is what improvement is measured against. Cheuk Ting Ho worked through the same logic methodically in the eval workshop "Do you know how well your model is doing?": own tasks, own metrics, with LightEval as the tool.

These internal eval sets are often the most valuable asset of an AI programme in 2026 — they outlast every model change and define what "good" actually means.
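The triple of question, correct answer and reliable sources maps naturally onto a small record type. The sketch below is an assumption about how such a set could be represented and scored, not the format used by the speakers: `GoldenCase`, `SystemOutput` and `score_case` are hypothetical names, and the substring match is a deliberately crude stand-in for a real correctness metric.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class GoldenCase:
    question: str
    reference_answer: str
    sources: frozenset[str]  # identifiers of documents accepted as reliable


@dataclass(frozen=True)
class SystemOutput:
    answer: str
    cited_sources: frozenset[str] = field(default_factory=frozenset)


def score_case(case: GoldenCase, output: SystemOutput) -> dict[str, bool]:
    """Two separate checks: is the answer right, and does it cite an accepted source?"""
    correct = case.reference_answer.lower() in output.answer.lower()
    grounded = bool(case.sources & output.cited_sources)
    return {"correct": correct, "grounded": grounded}


# Hypothetical example data.
case = GoldenCase(
    question="What is the notice period?",
    reference_answer="30 days",
    sources=frozenset({"contract-terms.pdf"}),
)
good = SystemOutput("The notice period is 30 days.", frozenset({"contract-terms.pdf"}))
ungrounded = SystemOutput("It is 30 days.", frozenset({"forum-post"}))

good_score = score_case(case, good)
ungrounded_score = score_case(case, ungrounded)
```

Keeping correctness and grounding as separate fields means an answer that is right for the wrong reason shows up as such instead of disappearing into a single score.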

Eval-Coupled Governance

The old architecture separated two layers: model evaluation on one side, compliance and governance on the other. The productive programmes of 2026 have collapsed that separation. Audit logs, drift detection, regulatory requirements, bias metrics — all run inside the same eval harness.

Andrei Beliankou and Evgeniya Ovchinnikova (E.ON) showed concretely what this looks like: three observability stacks in parallel (Langfuse, Opik, MLflow), tracing of individual spans, and cost breakdown along prompt paths. They also showed a typical production failure that any programme without this discipline runs into: competing eval checks (hallucination versus faithfulness) escalate against each other until the retry limit fires, and afterwards no one can say why the answer got worse.
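The failure mode of competing checks can be reproduced in a few lines. The sketch below is a toy reconstruction under assumptions, not the E.ON setup and not the API of Langfuse, Opik or MLflow: `generate_with_checks`, `Attempt` and `oscillating` are hypothetical names, and the two lambda checks are artificial stand-ins chosen so that they contradict each other.

```python
from dataclasses import dataclass
from typing import Callable

Check = Callable[[str], bool]


@dataclass(frozen=True)
class Attempt:
    number: int
    answer: str
    failed_checks: tuple[str, ...]


def generate_with_checks(
    generate: Callable[[int], str],
    checks: dict[str, Check],
    max_retries: int = 3,
) -> tuple[str, list[Attempt]]:
    """Retry until all checks pass or the limit fires; keep a trace of every attempt."""
    trace: list[Attempt] = []
    answer = ""
    for number in range(1, max_retries + 1):
        answer = generate(number)
        failed = tuple(name for name, check in checks.items() if not check(answer))
        trace.append(Attempt(number, answer, failed))
        if not failed:
            break
    return answer, trace


def oscillating(trace: list[Attempt]) -> bool:
    """True if no attempt passed and the failing check changed between attempts."""
    return all(a.failed_checks for a in trace) and len({a.failed_checks for a in trace}) > 1


# Toy generator and toy checks (hypothetical): one check rewards brevity,
# the other rewards quoting the source, so no answer satisfies both.
ANSWERS = {
    1: "According to the contract, the notice period is 30 days.",
    2: "30 days.",
    3: "According to the contract, the notice period is 30 days.",
}
CHECKS = {
    "hallucination": lambda a: len(a.split()) <= 5,
    "faithfulness": lambda a: "contract" in a.lower(),
}

final_answer, trace = generate_with_checks(lambda n: ANSWERS[n], CHECKS)
```

With the per-attempt trace, the question "why did the answer get worse" has an answer: the record shows which check rejected which attempt, and `oscillating(trace)` flags that the checks were pulling in opposite directions.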

Alejandro Saucedo (Zalando) put this into a wider arc: according to the current Survey on the State of Production Machine Learning Operations, roughly half of organisations still have no ML monitoring in production. His takeaway after two decades of production ML experience: less hype around models, more robust operational, monitoring and governance structures. Anyone setting up a programme in 2026 that treats this discipline as ornamentation is repeating the mistakes of 2018 to 2022, only with larger models and higher costs.

In regulated industries this is not a convenience but the precondition for a system to be released at all.

The Hierarchy of Solutions — and Why Most Get Complex Too Early

In the fireside chat at the conference, Sebastian Raschka described a sequence that many programmes walk the wrong way round. Anyone building an LLM-based solution should test the simplest form first: load the relevant information into the context, add no further complexity, and see how far that gets you. That is the baseline. Only when it doesn't suffice is retrieval-augmented generation worth it. Only when that doesn't suffice either is fine-tuning worth it. Knowledge graphs come last, if at all.

Most teams reverse this. They jump straight into fine-tuning because it is technically interesting. Or they build knowledge graphs because that looks like control. Or they discuss MCP integrations without ever checking whether a simple CLI access solves the same task.

This is not expensive because of the extra work. It is expensive because eval discipline blurs: anyone who starts with fine-tuning can no longer say cleanly whether the improvement came from fine-tuning or from something context management would have delivered just as well. The baseline is missing — and with it the basis on which every further investment can be assessed.
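The ordering argument can be written down as a simple escalation rule: measure each stage against the same eval set and move up only when the current stage misses the target. The sketch below is one possible reading under assumptions. `LADDER`, `choose_stage` and the scores in `MEASURED` are hypothetical, and the numbers are made up purely for illustration.

```python
from typing import Callable, Optional

# Stages in the order described in the text, simplest first.
LADDER = ["context_baseline", "rag", "fine_tuning", "knowledge_graph"]


def choose_stage(
    evaluate: Callable[[str], float],
    target: float,
) -> tuple[Optional[str], dict[str, float]]:
    """Walk the ladder in order and stop at the first stage that meets the target.

    Returns the chosen stage (None if no stage suffices) and the scores measured so far.
    """
    scores: dict[str, float] = {}
    for stage in LADDER:
        scores[stage] = evaluate(stage)
        if scores[stage] >= target:
            return stage, scores
    return None, scores


# Made-up scores standing in for real eval runs on the same eval set.
MEASURED = {
    "context_baseline": 0.71,
    "rag": 0.86,
    "fine_tuning": 0.88,
    "knowledge_graph": 0.88,
}

stage, scores = choose_stage(lambda s: MEASURED[s], target=0.85)
```

Because the baseline is always measured first, every later stage has a number to beat, and the more expensive stages are never built when an earlier one already meets the target.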

Hiring Profile: Domain Expert Willing to Experiment

When a conference attendee asked who a "manager with budget and a sovereignty mandate" should hire, Raschka gave an answer that tends to get lost in DACH practice: not primarily ML specialists. You need people who have worked with these systems enough to develop intuition, and who are willing to try things out.

"A domain expert who is willing to delegate the boring stuff."

That is a different hiring logic from "senior ML engineer with five years of PyTorch". It shifts the focus to two qualities: deep understanding of the business process, and the willingness to pick up a tool that you can learn to operate well enough in two or three months. Anyone who accepts that the decisive ingredient is not ML depth but domain-anchored eval discipline opens up a different talent market — one that fits most mid-market and corporate programmes considerably better.

Eval Sets as a Strategic Asset

Models change — every quarter. Which means the strategic core of a programme is not the current model but the eval set: 200 to 2,000 real examples from your own business process, with answers a domain expert has signed off as correct. With this set, every new model can be measured against your own reality within hours. Without it, you depend on public benchmarks that are rarely relevant to your own question.

"Measure, don't guess" requires building your own versioned eval set; otherwise, every model decision remains a matter of belief.
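One lightweight way to make an eval set "versioned" is to derive the version from its content, so that two scores are only compared when they were measured on the same cases. This is a sketch under assumptions, not a prescribed scheme: `eval_set_version` and `record_run` are hypothetical helpers, and each case is assumed to carry a unique `id` field.

```python
import hashlib
import json


def eval_set_version(cases: list[dict]) -> str:
    """Deterministic fingerprint of the eval set: same content, same version string."""
    canonical = json.dumps(
        sorted(cases, key=lambda c: c["id"]),  # order of cases must not matter
        sort_keys=True,
        ensure_ascii=False,
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def record_run(model: str, score: float, cases: list[dict]) -> dict:
    """Attach the eval-set version to every result so scores stay comparable."""
    return {"model": model, "score": score, "eval_set_version": eval_set_version(cases)}


# Hypothetical example cases.
cases = [
    {"id": "c1", "question": "Notice period?", "answer": "30 days"},
    {"id": "c2", "question": "Payment term?", "answer": "14 days"},
]

v_original = eval_set_version(cases)
v_reordered = eval_set_version(list(reversed(cases)))
v_extended = eval_set_version(cases + [{"id": "c3", "question": "Currency?", "answer": "EUR"}])
run = record_run("model-a", 0.5, cases)
```

Keeping the set in version control achieves the same goal; the fingerprint simply makes the version travel with every recorded result.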

A robust composition stratifies by characteristic: typical standard cases, borderline cases (ambiguous inputs), historical difficulties (cases where domain staff themselves disagreed), and deliberate stress tests (manipulated inputs, edge cases of the compliance requirement). 200 to 500 cases, roughly evenly distributed, cover most of what occurs in practice, although the exact number follows the domain, not a rule of thumb. The set needs quarterly maintenance, and annotation stays a human responsibility.
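Stratification pays off at reporting time: a single overall score can hide a stratum that is failing. The sketch below illustrates this with made-up results. The stratum labels in `STRATA` are shorthand for the four categories named above, and `per_stratum_accuracy` is a hypothetical helper, not part of any library.

```python
from collections import defaultdict

# Shorthand labels for the four categories described in the text.
STRATA = ("standard", "borderline", "historical_difficulty", "stress_test")


def per_stratum_accuracy(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Accuracy per stratum; `results` holds (stratum, passed) pairs."""
    totals: dict[str, int] = defaultdict(int)
    passed: dict[str, int] = defaultdict(int)
    for stratum, ok in results:
        if stratum not in STRATA:
            raise ValueError(f"unknown stratum: {stratum}")
        totals[stratum] += 1
        passed[stratum] += int(ok)
    # Only report strata that actually contain cases.
    return {s: passed[s] / totals[s] for s in STRATA if totals[s]}


# Made-up results for illustration: standard cases all pass, stress tests mostly fail.
results = [("standard", True)] * 4 + [("stress_test", True)] + [("stress_test", False)] * 3

overall = sum(ok for _, ok in results) / len(results)
report = per_stratum_accuracy(results)
```

In this toy run the overall accuracy looks moderate, while the per-stratum view shows that the stress tests are where the system breaks.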

From accompanying several AI programmes over the past year: programmes without their own eval set spend the first three months optimising things that can't be measured — and then realise they have no reference point for saying when it has got better. The build commits domain-expert capacity and needs supplementary engineering for versioning and pipeline integration. Even so, it is by some distance the most profitable investment in the first phase.

For Decision-Makers, This Means

First: No AI programme without your own eval set

Eval setup belongs in week one — owned by the business owner, not parked in the ML engineering roadmap.

Second: No fine-tuning without baseline evidence

Jumps into more complex layers need eval evidence, not enthusiasm — baseline, RAG, fine-tuning, knowledge graph, in that order.

Third: No governance concept without a technical audit trail

Compliance and eval belong in the same harness; without end-to-end observability, sign-off in regulated industries is not defensible.

Fourth: No hiring solely along classical ML profiles

Domain expert plus appetite for experimentation is an available, often cheaper, and for most use cases better-fitting profile.

Raschka's closing advice was drastic in its simplicity: try things out instead of planning too long. The talk title, "Stop Waiting, Start Shipping", said it all. Anyone who starts in 2026 without eval discipline is not buying a shortcut; the gap will still be there in 2027.

Related links

- PyCon DE & PyData 2026. Conference Programme (2026-04)

- Stop Waiting, Start Shipping: Real-World Strategy for Open-Source LLMs. Fireside Chat with Sebastian Raschka (2026-04)

- It Works on My Machine: Why LLM Apps Fail Users (Not Tests). Thomas Prexl, Frank Rust (2026-04)

- Don't call your LLM too often! How to build your dialog graph with confidence. Andrei Beliankou, Evgeniya Ovchinnikova (E.ON) (2026-04)

- Do you know how well your model is doing? Evaluate your LLMs. Cheuk Ting Ho (2026-04)

- Production ML across 2015–2035: A Journey to the Past and the Future. Alejandro Saucedo (Zalando) (2026-04)

- Measuring Massive Multitask Language Understanding (MMLU). Hendrycks et al. (2020-09)

- Evaluating Large Language Models Trained on Code (HumanEval). Chen et al. (2021-07)

Observations from PyCon DE & PyData 2026 and from the fireside chat "Stop Waiting, Start Shipping" with Sebastian Raschka.