Overfitted Promises: AI in Coding Research – Hype vs. Evolution

As a technology leader, who can you actually trust? What works with AI coding tools, what doesn't, and what does that mean for your engineering organisation?

At a Glance

- The thesis: today's AI coding tools work more like alchemy than like established science — trial and error with black-box outputs.

- The good news: the comparison isn't pejorative. Newton's alchemical research led to modern chemistry.

- The strategic point: you have to understand where AI genuinely makes you productive (boilerplate, bug fixes, documentation) and where it fails (architecture decisions, complex security, domain-specific logic).

- The consequence: not every team and not every task is equally suited to AI coding tools. Adoption needs strategy, not just enthusiasm.

For context: This piece is an opinionated vision piece, not a playbook. The technology moves so fast that it would be dishonest to lay down definitive best practices here. What you are reading is a hypothesis — and above all an argument for developing fresh evaluation frames, instead of relabelling existing software engineering routines as "and now with AI". The tools, the language, the use cases and the boardroom expectations are new — the lens we use to judge them ought to be new too. Anyone reading this critically and thinking along is in the right place. Anyone expecting a finished roadmap will be left dissatisfied — that is by design.

At neurons&neckar 2025 I put forward a provocative thesis: today's AI coding tools resemble alchemy more than modern science. But this is neither pure criticism nor hype. It is a sober stocktaking — and a look at how your engineering organisation should deal with it.

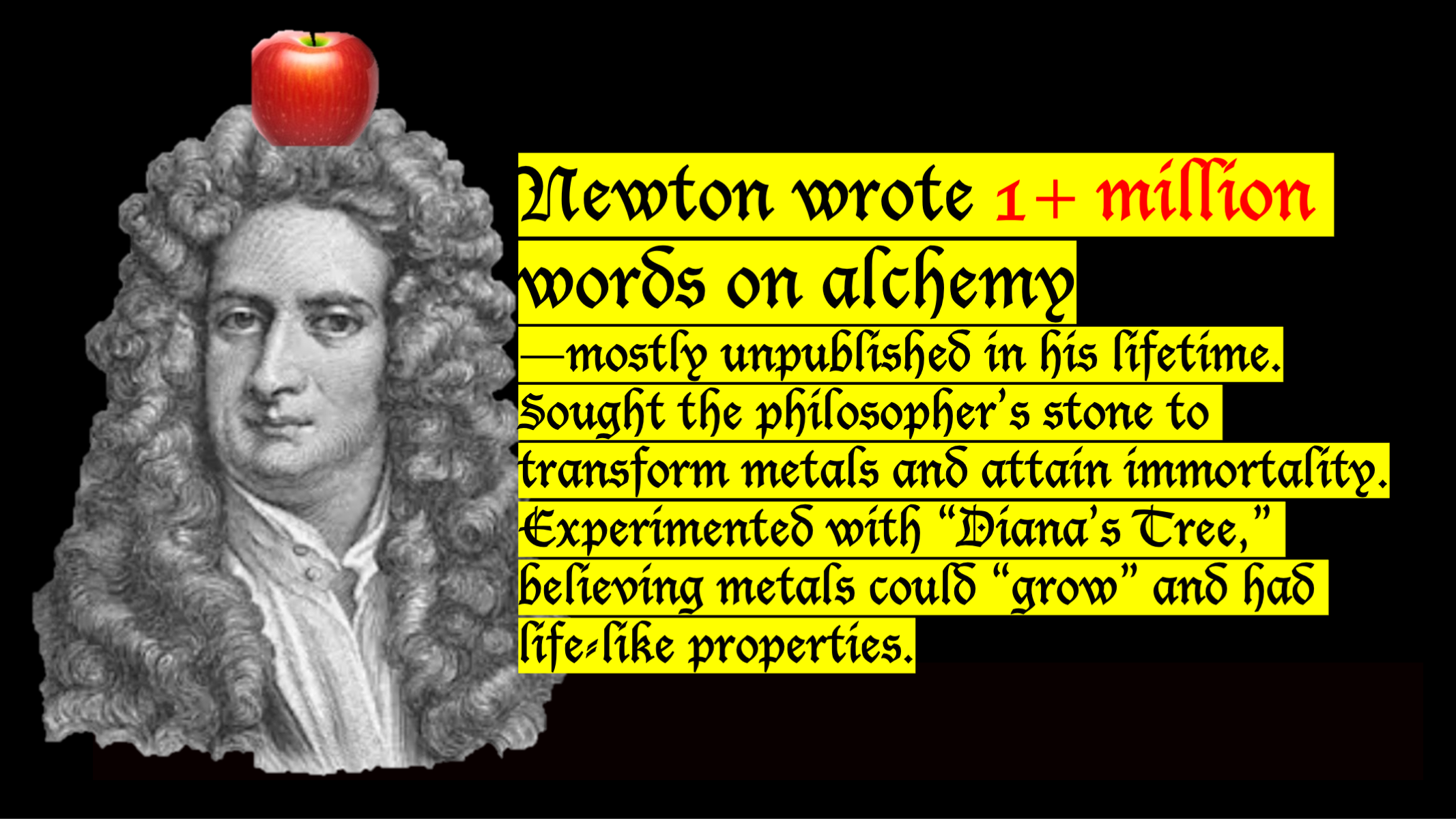

Newton: Between Alchemy and Revolution

Isaac Newton wrote more than a million words on alchemy, most of them unpublished in his lifetime. He was searching for the philosopher's stone, which was supposed to transmute metals and grant immortality. He experimented with "Diana's tree" and believed that metals could "grow" and had life-like properties.

The verdict: he never reached that goal. The consequences, however, were enormous. His alchemical experiments were methodical and meticulously documented. They led to scientific breakthroughs in chemistry, optics and thermodynamics. Newton needed alchemy in order to invent science.

That is the pattern I see today around AI coding tools: trial and error with an invisible mechanism — but not without value.

The Parallel: AI Coding Today

The problem I observe in companies: they roll out GitHub Copilot or Cursor, expect a 30 percent productivity jump, and are then puzzled when reality turns out to be more complex.

Today's AI coding tools work like alchemy, not like established science:

- They need the right words (prompt engineering), but the rules behind those words are invisible.

- They produce astonishing results on simple tasks, and fail on subtle requirements.

- Why a prompt works or doesn't cannot be explained systematically.

- You have to experiment, adjust, retry — trial and error.

AI coding is a new layer that we still have to learn to handle and to fence in.

But there is a pattern. And once we understand the pattern — once we know where these tools work and where they don't — we can deploy them deliberately.

Where AI Coding Tools Really Work — and Where They Don't

The good news: there is a pattern. Not every task is the same.

Where they consistently deliver

- Boilerplate and standard patterns: repetitive structures that look the same everywhere.

- Code completion and suggestions: intelligent auto-completion wherever the code is roughly 80 percent similar to patterns in the training data.

- Simple bug fixes: obvious mistakes (typos, wrong function calls, simple logic errors).

- Documentation and explanations: turning code into prose; explaining concepts to beginners.

- Trivial conversions: translating code between similar languages (Python ↔ JavaScript).

Where it gets alchemical — and you need to pay attention

- Architecture decisions: choosing between microservices and a monolith requires business understanding, not code generation.

- Security: vulnerabilities are often context-dependent. A generated token handler can look secure without being secure.

- Performance optimisations: subtle improvements need system understanding; AI can suggest variants but cannot understand why one of them is faster.

- Domain-specific logic: banking rules, compliance, business logic — none of this can be fully grasped by an LLM from the outside.

- Debugging complex problems: when ten systems hang together and one isn't working, you need systematic thinking, not pattern matching.

A Strategic Example: The Agentic AI Problem

A second pattern shows up around AI agents (discussed in more detail in the post "Agentic AI"). Automated systems that are meant to act on their own need clear guardrails. It is the same problem: humans have to define where the AI is allowed to act and where it is not. The AI will not get this right without human guidance.

The same applies to code generation: an AI cannot decide for itself whether generated code is "good enough" for production. It can iterate quickly, but the final call — the critical call — remains with humans.
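The guardrail idea can be made concrete. The following Python sketch assumes a hypothetical agent that asks for permission before each action; the action names and the three-way decision are illustrative and are not the API of any real agent framework. Humans fill in the lists, and anything not listed is denied by default.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"              # the agent may act on its own
    NEEDS_HUMAN = "needs_human"  # a person must approve first
    DENY = "deny"                # outside the agent's mandate entirely


# Hypothetical action names; a real agent framework will use its own vocabulary.
AUTONOMOUS_ACTIONS = {"read_file", "run_tests", "propose_patch"}
HUMAN_GATED_ACTIONS = {"merge_to_main", "deploy_production"}


@dataclass(frozen=True)
class AgentAction:
    name: str
    target: str


def check_action(action: AgentAction) -> Decision:
    """Humans define the limits up front; the agent never decides them itself."""
    if action.name in AUTONOMOUS_ACTIONS:
        return Decision.ALLOW
    if action.name in HUMAN_GATED_ACTIONS:
        return Decision.NEEDS_HUMAN
    # Anything not explicitly listed is denied by default.
    return Decision.DENY
```

For example, `check_action(AgentAction("run_tests", "billing"))` returns `Decision.ALLOW`, while a `deploy_production` request lands in `Decision.NEEDS_HUMAN`. Deny-by-default is the design choice that matters here: the agent cannot widen its own mandate, and the critical call stays with a person.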

The Evolution: From Alchemy to Science

Newton needed 50 years to get from alchemy to science. We will be faster. But we are not there yet.

The direction is clear:

- Better explainability: tools that show why a suggestion works (or why it doesn't).

- Specialisation: not one general LLM for everything, but specialised models for SQL, security, test writing and so on.

- Reproducibility: the same query should not produce a different result each time.

- Measurement instead of feeling: clear metrics for productivity uplift, not just "feels faster".

- Systematic quality assurance: not hoping the generated code is good — knowing it.

- New evaluation frames: the question "does the code work?" is not enough. We need methods that evaluate co-evolving human–AI systems — software engineering reviews from the pre-LLM era are a stopgap lens, not the right tool.

What This Means Strategically: Four Heuristics for the Transition

These four points are not best practices: the technology is too young, and there is not yet enough material to distil best practices from. They are heuristics that hold up in today's phase and deserve to be reassessed in twelve months.

1. Map, don't evangelise

Before you adopt AI coding tools, you have to know: which tasks dominate in our work? Are they 70 percent boilerplate (large opportunity) or 70 percent complex domain logic (limited opportunity)? No one-size-fits-all — different teams need different strategies.
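One way to make this mapping measurable is to label a sample of recent tickets with the task categories from the two lists above and compute the share that falls into the categories where the tools tend to deliver. A minimal Python sketch, assuming the tickets have already been labelled by hand; the category names and the 30/70 percent cut-offs are illustrative choices, not an established metric.

```python
from collections import Counter

# Labels derived from the two lists above; the names are illustrative.
AI_FRIENDLY = {"boilerplate", "simple_bugfix", "documentation", "conversion"}
ALCHEMICAL = {"architecture", "security", "performance", "domain_logic", "complex_debugging"}


def ai_friendly_share(task_labels: list[str]) -> float:
    """Fraction of a team's tasks that fall into categories where AI tools tend to deliver."""
    if not task_labels:
        raise ValueError("need at least one labelled task")
    counts = Counter(task_labels)
    unknown = set(counts) - AI_FRIENDLY - ALCHEMICAL
    if unknown:
        raise ValueError(f"unknown categories: {sorted(unknown)}")
    friendly = sum(n for label, n in counts.items() if label in AI_FRIENDLY)
    return friendly / len(task_labels)


def opportunity(share: float) -> str:
    # Cut-offs mirror the 70-percent examples in the text; tune them per organisation.
    if share >= 0.7:
        return "large"
    if share <= 0.3:
        return "limited"
    return "mixed"
```

A team whose sample is eight boilerplate tickets and two domain-logic tickets scores 0.8 and lands in "large"; the reverse mix lands in "limited". Rejecting unknown labels keeps the mapping honest: every ticket has to be placed on one side of the line.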

2. Start with small pilots

Begin with a team that does a lot of boilerplate and bug-fix work. Measure: what becomes faster, what doesn't? Which bugs appear? Where do you need more reviews? Then scale only if the balance is positive.
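The "balance" of a pilot can be written down before the pilot starts. This Python sketch assumes you can collect per-change cycle times, defect counts and reviewer hours for a baseline period and for the pilot period; the metric names and the 10 percent noise tolerance are hypothetical choices. Speed only counts if defects and review load do not rise with it.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PeriodMetrics:
    cycle_times_h: list[float]  # hours from first commit to merge, per change
    defects: int                # bugs traced back to changes in the period
    changes: int                # number of merged changes
    review_hours: float         # total reviewer time spent

    def defect_rate(self) -> float:
        return self.defects / self.changes

    def review_hours_per_change(self) -> float:
        return self.review_hours / self.changes


def balance_is_positive(baseline: PeriodMetrics, pilot: PeriodMetrics,
                        tolerance: float = 0.10) -> bool:
    """Scale only if the pilot is faster without paying for it in bugs or review load.

    `tolerance` is an illustrative allowance for noise (10 percent by default).
    """
    faster = median(pilot.cycle_times_h) < median(baseline.cycle_times_h)
    no_more_bugs = pilot.defect_rate() <= baseline.defect_rate() * (1 + tolerance)
    review_ok = (pilot.review_hours_per_change()
                 <= baseline.review_hours_per_change() * (1 + tolerance))
    return faster and no_more_bugs and review_ok
```

With a baseline of `PeriodMetrics([10, 12, 14], defects=4, changes=40, review_hours=40)` and a pilot of `PeriodMetrics([7, 8, 9], defects=4, changes=40, review_hours=42)`, the balance is positive. Double the pilot's defects and it turns negative, even though the team got faster.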

3. Quality gates are not optional

AI-generated code needs reviews that are as rigorous as those for any other code, only with a different focus. Not "does it work?", but "is the approach safe?" and "have we done it like this before, or is this new?". These reviews have to be done by your architects, not by junior developers.

4. Fence in the risk

Some codebases are too critical for AI experiments. Security, payment processing, core logic — AI-generated code does not belong there until the tools are more mature. That is not technophobia; it is risk management.
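Heuristics 3 and 4 can be combined into a single review policy, for example as a merge check. This Python sketch assumes a hypothetical flag that says whether a change was AI-assisted (for instance, a pull-request label your team agrees on) and uses made-up path patterns for the protected areas; `fnmatch` is Python's standard shell-style pattern matcher, where `*` also matches `/`.

```python
from fnmatch import fnmatch

# Hypothetical path patterns for the areas the text calls too critical for AI experiments.
PROTECTED_PATTERNS = ["src/security/*", "src/payments/*", "src/core/*"]


def review_policy(changed_paths: list[str], ai_assisted: bool) -> str:
    """Return the review route for a change (an illustrative policy, not a standard)."""
    touches_protected = any(
        fnmatch(path, pattern)
        for path in changed_paths
        for pattern in PROTECTED_PATTERNS
    )
    if ai_assisted and touches_protected:
        return "reject"            # heuristic 4: fence in the risk
    if ai_assisted:
        return "architect_review"  # heuristic 3: reviewed by architects, not juniors
    return "standard_review"
```

An AI-assisted change to `src/payments/charge.py` is rejected, an AI-assisted change to `src/ui/button.ts` goes to an architect, and a hand-written payments change takes the normal route. Keeping the protected list in one place makes it easy to shrink later, once the tools have matured.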

The Uncomfortable Truth — and the Opportunity

Today's AI coding tools are overfitted to their current applications. That means: they perform brilliantly inside their training corridor (boilerplate, APIs, simple patterns) and less well the further you move away from it.

This is not malicious. This is physics.

But: Newton's alchemy was also "overfitted" to the transmutation of metals. And yet it led to modern chemistry, to thermodynamics, to optics. Not because the original question was right, but because he experimented and learned systematically.

That is what is happening with AI coding today. We are in the "alchemical" phase. We are in the middle of discovery. The companies that understand this now — that encourage their teams to experiment systematically, that introduce quality gates, that learn where these tools belong and where they don't — will reap the benefits when the science arrives.

The others will later wonder why they lost so much time.

Conclusion: Tell Hype from Opportunity

I often hear in boardrooms: "We have to invest in AI" or "Everyone's already using Copilot." That is disorientation driven by hype. The right question is not "do we need AI coding?" but "where does AI coding pay off economically in our organisation, and where does it add risk?"

The answer is different at every company. And if you are unsure — if you do not know how to make that assessment, which tools fit you, how to roll them out without creating chaos — that is exactly what I am here for.

Not every AI promise is a promise. Some are real opportunities. The difference is strategy — and the courage to look at the new with fresh eyes, instead of forcing it into the old lens.

Unsure which AI coding tools actually pay off in your organisation?

Let's talk